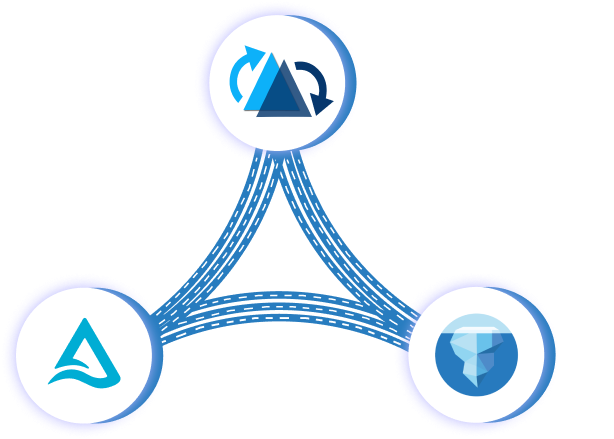

Apache XTable™ reads the existing metadata of your table and writes out metadata for one or more other table formats, using the existing APIs provided by each table format project. The metadata is persisted under a directory in the base path of your table (_delta_log for Delta, metadata for Iceberg, and .hoodie for Hudi). This allows your existing data to be read as though it had been written using Delta, Hudi, or Iceberg. For example, a Spark reader can use `spark.read.format("<delta | hudi | iceberg>").load("path/to/data")`.
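As a rough illustration of the directory layout described above, the sketch below checks which format metadata directories exist under a table base path. It only handles a local file system path; `readable_formats` and `METADATA_DIRS` are hypothetical names for this example and are not part of XTable.

```python
from pathlib import Path
from typing import List

# Metadata directory per format, as listed in the paragraph above.
METADATA_DIRS = {
    "delta": "_delta_log",
    "iceberg": "metadata",
    "hudi": ".hoodie",
}


def readable_formats(table_base_path: str) -> List[str]:
    """Return the formats whose metadata directory exists under the base path.

    Assumption: the table lives on a local file system. An object store
    would need a different listing call.
    """
    base = Path(table_base_path)
    return sorted(
        fmt for fmt, dirname in METADATA_DIRS.items() if (base / dirname).is_dir()
    )
```

A table written with Hudi and synced to Delta would be expected to report both `delta` and `hudi`, and either name could then be passed to `spark.read.format(...)`.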

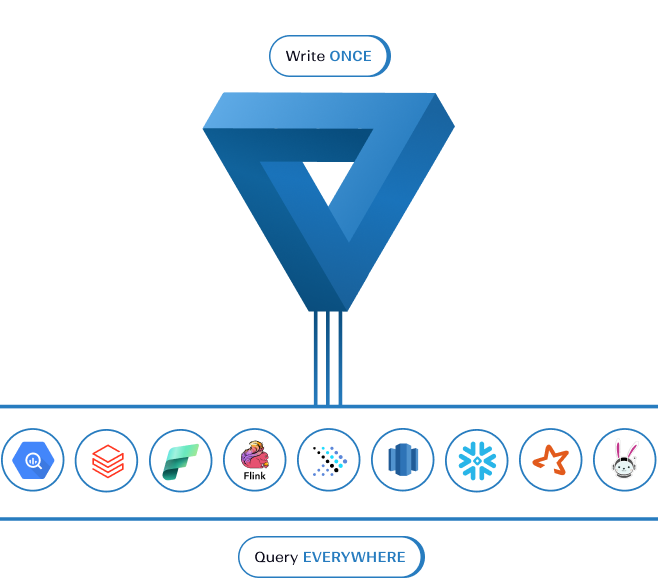

Apache XTable™ provides abstraction interfaces that allow omni-directional interoperability across Delta, Hudi, Iceberg, and any future lakehouse table formats such as Apache Paimon. Apache XTable™ is a standalone GitHub project that provides a neutral space for all the lakehouse table formats to collaborate constructively.

Delta Lake UniForm is a one-directional conversion from Delta Lake to Apache Hudi or Apache Iceberg. UniForm is also governed inside the Delta Lake repo.

Apache XTable™ can be used to switch easily between any of the table formats, or even to benefit from more than one simultaneously. Some organizations use Apache XTable™ today because they have a diverse ecosystem of tools with polarized vendor support for table formats. Some users want lightning-fast ingestion or indexing from Hudi together with the Photon query acceleration of Delta Lake inside Databricks. Some users want managed table services from Hudi, but also want write operations from Trino to Iceberg. Regardless of which combination of formats you need, Apache XTable™ ensures you can benefit from all three projects.

Yes, anywhere that Delta, Iceberg, or Hudi work, Apache XTable™ works.

1. Hudi and Iceberg Merge-on-Read (MoR) tables are not supported

2. Delta deletion vectors are not supported

3. Transaction timestamps are not synchronized across formats

With Apache XTable™ you pick one primary format and one or more secondary formats. Write operations with the primary format work as normal. Apache XTable™ then translates the metadata from the primary format to the secondaries. When the metadata of the secondary formats is committed, the commit timestamp will not be exactly the same as the timestamp shown in the primary.
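The primary/secondary choice can be sketched as a small config builder. This is a minimal sketch, not XTable's own code: `build_sync_config` is a hypothetical helper, and the key names (`sourceFormat`, `targetFormats`, `datasets`, `tableBasePath`, `tableName`) are modeled on the dataset config described in the XTable documentation, so check them against the version you run.

```python
from typing import Dict, List

SUPPORTED_FORMATS = {"DELTA", "HUDI", "ICEBERG"}


def build_sync_config(
    primary: str, secondaries: List[str], table_base_path: str, table_name: str
) -> Dict:
    """Build a sync config with one primary and one or more secondary formats.

    Key names are an assumption based on the XTable docs; verify before use.
    """
    source = primary.upper()
    targets = [s.upper() for s in secondaries]
    if source not in SUPPORTED_FORMATS:
        raise ValueError(f"unsupported primary format: {primary}")
    if not targets:
        raise ValueError("at least one secondary format is required")
    unknown = [t for t in targets if t not in SUPPORTED_FORMATS]
    if unknown:
        raise ValueError(f"unsupported secondary formats: {unknown}")
    if source in targets:
        raise ValueError("the primary format cannot also be a secondary format")
    return {
        "sourceFormat": source,      # format your writers keep using
        "targetFormats": targets,    # formats XTable writes metadata for
        "datasets": [
            {"tableBasePath": table_base_path, "tableName": table_name}
        ],
    }
```

For example, `build_sync_config("hudi", ["delta", "iceberg"], "path/to/data", "my_table")` describes a table written with Hudi whose metadata is translated to Delta and Iceberg.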

Come check out the project on GitHub and add a little star. There are low-hanging-fruit features, bug fixes, and documentation improvements waiting to be picked up. Reach out directly to any of the contributors on GitHub to ask for help.

Follow the Apache XTable™ (Incubating) community channels on LinkedIn and Twitter. Subscribe to the mailing list by sending an email to dev-subscribe@xtable.apache.org. Follow the project on GitHub or reach out directly to any of the GitHub contributors to learn more.